Week 6: Text Mining

BIOSTAT620: Introduction to Health Data Science

Acknowledgment

These slides were originally developed by Emil Hvitfeldt and modified by Dylan Cable, George G. Vega Yon, and Kelly Street.

Plan for the week

- We will try to turn text into numbers

- Then use tidy principals to explore those numbers

Why tidytext?

Works seemlessly with ggplot2, dplyr and tidyr.

Alternatives:

R: quanteda, tm, koRpus

Python: nltk, Spacy, gensim

Alice’s Adventures in Wonderland

Download the alice dataset from https://github.com/dmcable/BIOSTAT620W26/blob/main/data/text/alice.rds)

# A tibble: 3,351 × 3

text chapter chapter_name

<chr> <int> <chr>

1 "CHAPTER I." 1 CHAPTER I.

2 "Down the Rabbit-Hole" 1 CHAPTER I.

3 "" 1 CHAPTER I.

4 "" 1 CHAPTER I.

5 "Alice was beginning to get very tired of sitting by he… 1 CHAPTER I.

6 "bank, and of having nothing to do: once or twice she h… 1 CHAPTER I.

7 "the book her sister was reading, but it had no picture… 1 CHAPTER I.

8 "conversations in it, “and what is the use of a book,” … 1 CHAPTER I.

9 "“without pictures or conversations?”" 1 CHAPTER I.

10 "" 1 CHAPTER I.

# ℹ 3,341 more rowsTokenizing

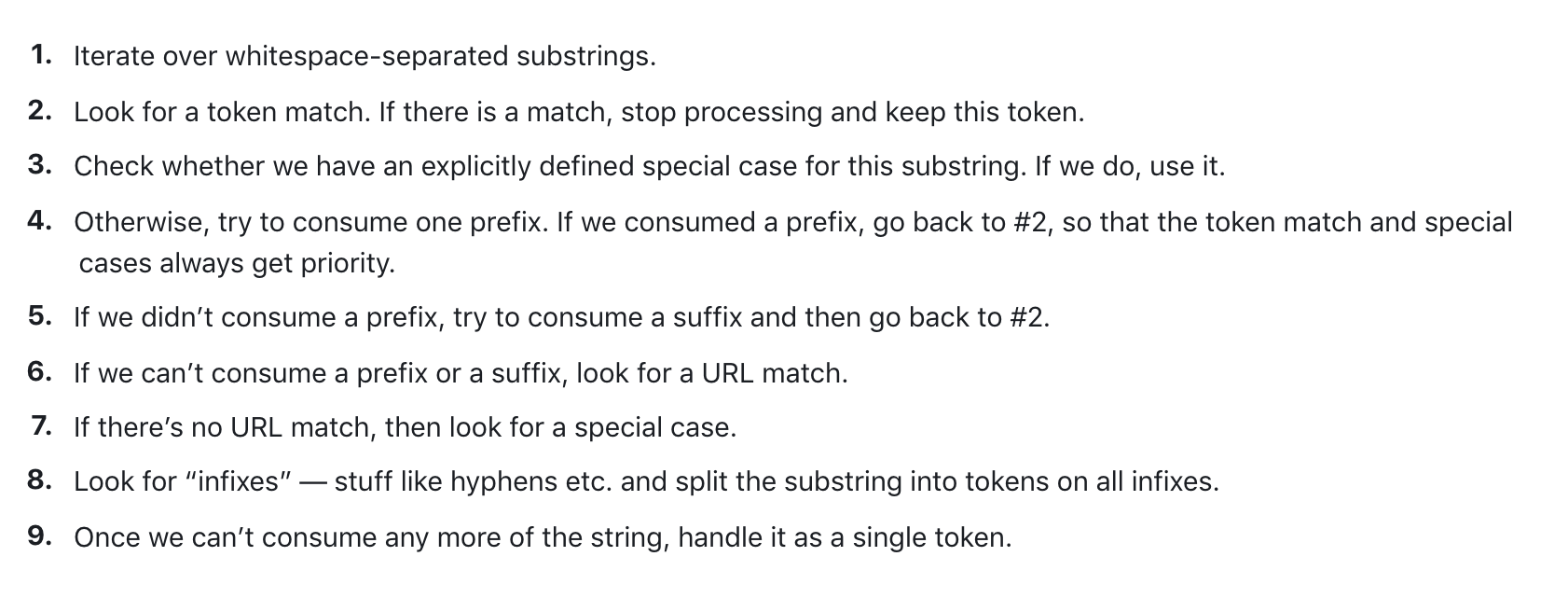

Turning text into smaller units

In English:

- split by spaces

- more advanced algorithms

Spacy tokenizer

Turning the data into a tidy format

# A tibble: 26,687 × 3

chapter chapter_name token

<int> <chr> <chr>

1 1 CHAPTER I. chapter

2 1 CHAPTER I. i

3 1 CHAPTER I. down

4 1 CHAPTER I. the

5 1 CHAPTER I. rabbit

6 1 CHAPTER I. hole

7 1 CHAPTER I. alice

8 1 CHAPTER I. was

9 1 CHAPTER I. beginning

10 1 CHAPTER I. to

# ℹ 26,677 more rowsWords as a unit

Now that we have words as the observation unit we can use the dplyr toolbox.

Using dplyr verbs

# A tibble: 26,687 × 3

chapter chapter_name token

<int> <chr> <chr>

1 1 CHAPTER I. chapter

2 1 CHAPTER I. i

3 1 CHAPTER I. down

4 1 CHAPTER I. the

5 1 CHAPTER I. rabbit

6 1 CHAPTER I. hole

7 1 CHAPTER I. alice

8 1 CHAPTER I. was

9 1 CHAPTER I. beginning

10 1 CHAPTER I. to

# ℹ 26,677 more rowsUsing dplyr verbs

Using dplyr verbs

Using dplyr verbs

Using dplyr verbs

library(dplyr)

alice |>

unnest_tokens(token, text) |>

group_by(chapter) |>

count(token) |>

top_n(10, n)# A tibble: 122 × 3

# Groups: chapter [12]

chapter token n

<int> <chr> <int>

1 1 a 52

2 1 alice 27

3 1 and 65

4 1 i 30

5 1 it 62

6 1 of 43

7 1 she 79

8 1 the 92

9 1 to 75

10 1 was 52

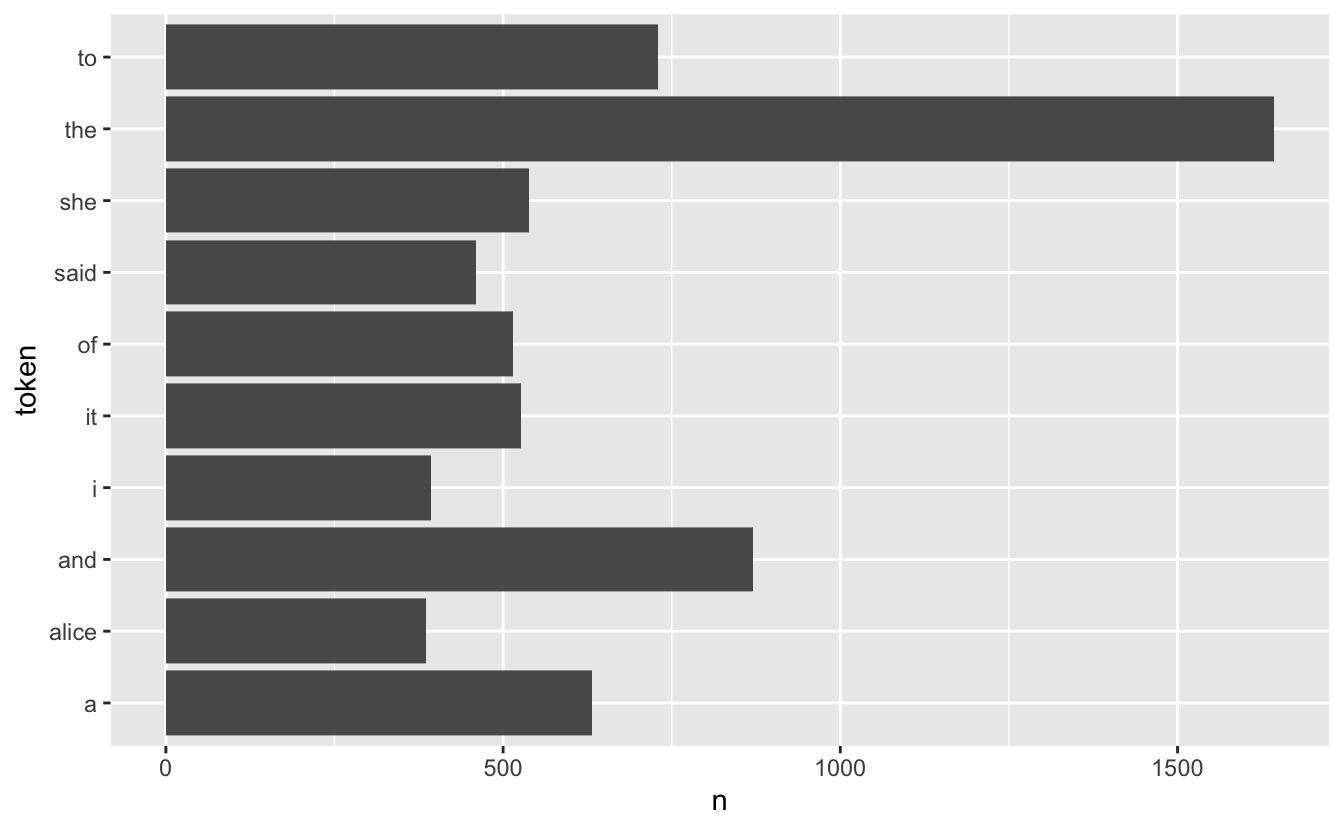

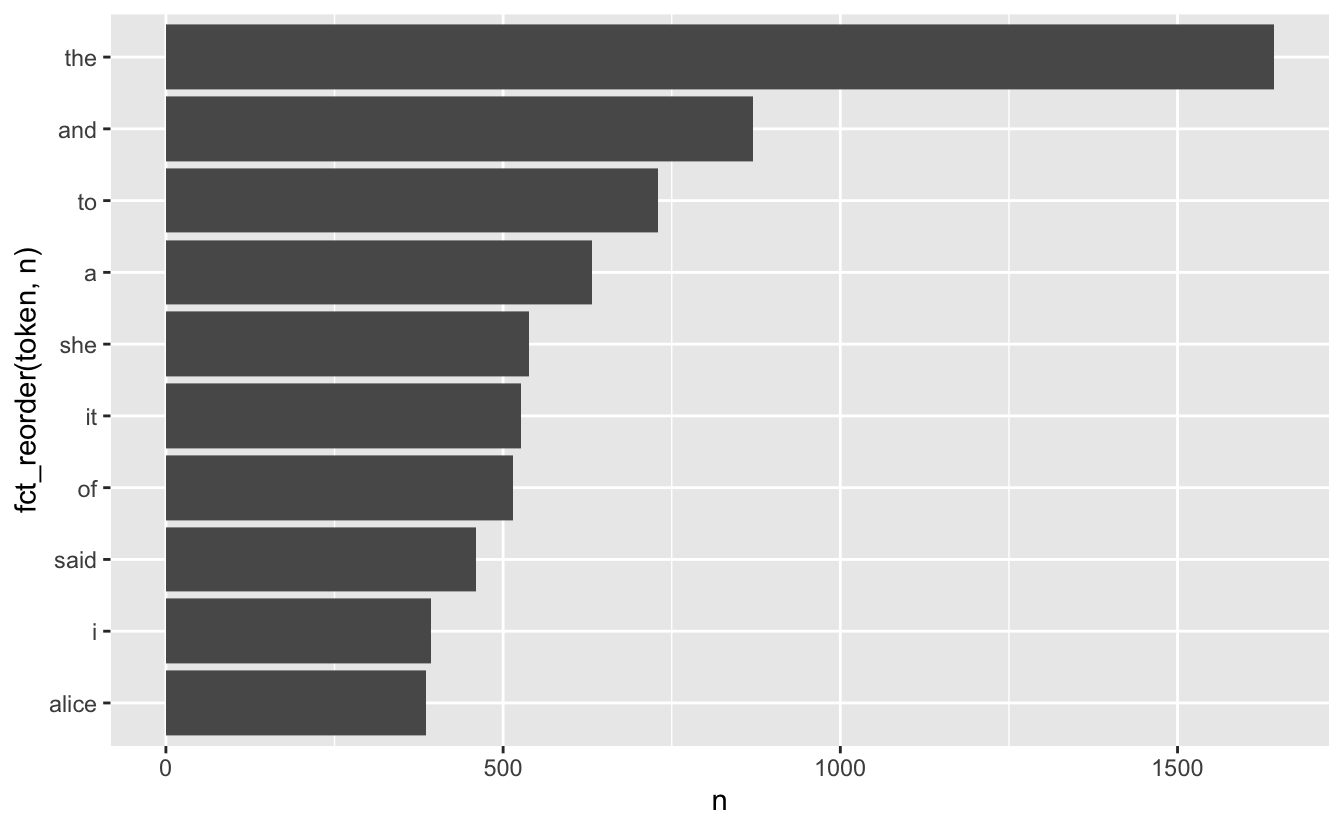

# ℹ 112 more rowsdplyr verbs and ggplot2

dplyr verbs and ggplot2

Stop words

A lot of the words don’t tell us very much. Words such as “the”, “and”, “at” and “for” appear a lot in English text but doesn’t add much to the context.

Words such as these are called stop words

For more information about differences in stop words and when to remove them read this chapter: https://smltar.com/stopwords

Stop words in tidytext

tidytext comes with a built-in data.frame of stop words

Stop word lexicons

snowball stopwords

[1] "i" "me" "my" "myself" "we"

[6] "our" "ours" "ourselves" "you" "your"

[11] "yours" "yourself" "yourselves" "he" "him"

[16] "his" "himself" "she" "her" "hers"

[21] "herself" "it" "its" "itself" "they"

[26] "them" "their" "theirs" "themselves" "what"

[31] "which" "who" "whom" "this" "that"

[36] "these" "those" "am" "is" "are"

[41] "was" "were" "be" "been" "being"

[46] "have" "has" "had" "having" "do"

[51] "does" "did" "doing" "would" "should"

[56] "could" "ought" "i'm" "you're" "he's"

[61] "she's" "it's" "we're" "they're" "i've"

[66] "you've" "we've" "they've" "i'd" "you'd"

[71] "he'd" "she'd" "we'd" "they'd" "i'll"

[76] "you'll" "he'll" "she'll" "we'll" "they'll"

[81] "isn't" "aren't" "wasn't" "weren't" "hasn't"

[86] "haven't" "hadn't" "doesn't" "don't" "didn't"

[91] "won't" "wouldn't" "shan't" "shouldn't" "can't"

[96] "cannot" "couldn't" "mustn't" "let's" "that's"

[101] "who's" "what's" "here's" "there's" "when's"

[106] "where's" "why's" "how's" "a" "an"

[111] "the" "and" "but" "if" "or"

[116] "because" "as" "until" "while" "of"

[121] "at" "by" "for" "with" "about"

[126] "against" "between" "into" "through" "during"

[131] "before" "after" "above" "below" "to"

[136] "from" "up" "down" "in" "out"

[141] "on" "off" "over" "under" "again"

[146] "further" "then" "once" "here" "there"

[151] "when" "where" "why" "how" "all"

[156] "any" "both" "each" "few" "more"

[161] "most" "other" "some" "such" "no"

[166] "nor" "not" "only" "own" "same"

[171] "so" "than" "too" "very" Duplicated stopwords

down would a about above

4 4 3 3 3

after again against all an

3 3 3 3 3

and any are as at

3 3 3 3 3

be because been before being

3 3 3 3 3

between both but by cannot

3 3 3 3 3

could did do does during

3 3 3 3 3

each few for from further

3 3 3 3 3

had has have having he

3 3 3 3 3

her here herself high him

3 3 3 3 3

himself his how i if

3 3 3 3 3

in into is it its

3 3 3 3 3

itself me more most my

3 3 3 3 3

myself new no not of

3 3 3 3 3

off on once only or

3 3 3 3 3

other our out over right

3 3 3 3 3

same she should some still

3 3 3 3 3

such than that the their

3 3 3 3 3

them then there these they

3 3 3 3 3

this those through to too

3 3 3 3 3

under until up very was

3 3 3 3 3

we were what when where

3 3 3 3 3

which while who why with

3 3 3 3 3

you your yours across almost

3 3 3 2 2

alone along already also although

2 2 2 2 2

always am among another anybody

2 2 2 2 2

anyone anything anywhere aren't around

2 2 2 2 2

ask asking away became become

2 2 2 2 2

becomes behind below best better

2 2 2 2 2

came can can't certain certainly

2 2 2 2 2

clearly come couldn't didn't different

2 2 2 2 2

doesn't doing don't done either

2 2 2 2 2

enough even ever every everybody

2 2 2 2 2

everyone everything everywhere far first

2 2 2 2 2

four get gets given gives

2 2 2 2 2

go going got hadn't hasn't

2 2 2 2 2

haven't he's here's hers however

2 2 2 2 2

i'd i'll i'm i've isn't

2 2 2 2 2

it's just keep keeps know

2 2 2 2 2

known knows last later least

2 2 2 2 2

less let let's like likely

2 2 2 2 2

many may might mostly much

2 2 2 2 2

must necessary need needs never

2 2 2 2 2

next nobody non noone nor

2 2 2 2 2

nothing now nowhere often old

2 2 2 2 2

one others ought ours ourselves

2 2 2 2 2

own per perhaps possible quite

2 2 2 2 2

rather really said saw say

2 2 2 2 2

says second see seem seemed

2 2 2 2 2

seeming seems several shall shouldn't

2 2 2 2 2

since so somebody someone something

2 2 2 2 2

somewhere sure take taken that's

2 2 2 2 2

theirs themselves there's therefore they'd

2 2 2 2 2

they'll they're they've think though

2 2 2 2 2

three thus together took toward

2 2 2 2 2

two upon us use used

2 2 2 2 2

uses want wants wasn't way

2 2 2 2 2

we'd we'll we're we've well

2 2 2 2 2

went weren't what's where's whether

2 2 2 2 2

who's whole whom whose will

2 2 2 2 2

within without won't wouldn't yet

2 2 2 2 2

you'd you'll you're you've yourself

2 2 2 2 2

yourselves a's able according accordingly

2 1 1 1 1

actually afterwards ain't allow allows

1 1 1 1 1

amongst anyhow anyway anyways apart

1 1 1 1 1

appear appreciate appropriate area areas

1 1 1 1 1

aside asked asks associated available

1 1 1 1 1

awfully b back backed backing

1 1 1 1 1

backs becoming beforehand began beings

1 1 1 1 1

believe beside besides beyond big

1 1 1 1 1

brief c c'mon c's cant

1 1 1 1 1

case cases cause causes changes

1 1 1 1 1

clear co com comes concerning

1 1 1 1 1

consequently consider considering contain containing

1 1 1 1 1

contains corresponding course currently d

1 1 1 1 1

definitely described despite differ differently

1 1 1 1 1

downed downing downs downwards e

1 1 1 1 1

early edu eg eight else

1 1 1 1 1

elsewhere end ended ending ends

1 1 1 1 1

entirely especially et etc evenly

1 1 1 1 1

ex exactly example except f

1 1 1 1 1

face faces fact facts felt

1 1 1 1 1

fifth find finds five followed

1 1 1 1 1

following follows former formerly forth

1 1 1 1 1

full fully furthered furthering furthermore

1 1 1 1 1

furthers g gave general generally

1 1 1 1 1

getting give goes gone good

1 1 1 1 1

goods gotten great greater greatest

1 1 1 1 1

greetings group grouped grouping groups

1 1 1 1 1

h happens hardly he'd he'll

1 1 1 1 1

hello help hence hereafter hereby

1 1 1 1 1

herein hereupon hi higher highest

1 1 1 1 1

hither hopefully how's howbeit ie

1 1 1 1 1

ignored immediate important inasmuch inc

1 1 1 1 1

indeed indicate indicated indicates inner

1 1 1 1 1

insofar instead interest interested interesting

1 1 1 1 1

interests inward it'd it'll j

1 1 1 1 1

k kept kind knew l

1 1 1 1 1

large largely lately latest latter

1 1 1 1 1

latterly lest lets liked little

1 1 1 1 1

long longer longest look looking

1 1 1 1 1

looks ltd m made mainly

1 1 1 1 1

make making man maybe mean

1 1 1 1 1

meanwhile member members men merely

1 1 1 1 1

moreover mr mrs mustn't n

1 1 1 1 1

name namely nd near nearly

1 1 1 1 1

needed needing neither nevertheless newer

1 1 1 1 1

newest nine none normally novel

1 1 1 1 1

number numbers o obviously oh

1 1 1 1 1

ok okay older oldest ones

1 1 1 1 1

onto open opened opening opens

1 1 1 1 1

order ordered ordering orders otherwise

1 1 1 1 1

outside overall p part parted

1 1 1 1 1

particular particularly parting parts place

1 1 1 1 1

placed places please plus point

1 1 1 1 1

pointed pointing points present presented

1 1 1 1 1

presenting presents presumably probably problem

1 1 1 1 1

problems provides put puts q

1 1 1 1 1

que qv r rd re

1 1 1 1 1

reasonably regarding regardless regards relatively

1 1 1 1 1

respectively room rooms s saying

1 1 1 1 1

secondly seconds seeing seen sees

1 1 1 1 1

self selves sensible sent serious

1 1 1 1 1

seriously seven shan't she'd she'll

1 1 1 1 1

she's show showed showing shows

1 1 1 1 1

side sides six small smaller

1 1 1 1 1

smallest somehow sometime sometimes somewhat

1 1 1 1 1

soon sorry specified specify specifying

1 1 1 1 1

state states sub sup t

1 1 1 1 1

t's tell tends th thank

1 1 1 1 1

thanks thanx thats thence thereafter

1 1 1 1 1

thereby therein theres thereupon thing

1 1 1 1 1

things thinks third thorough thoroughly

1 1 1 1 1

thought thoughts throughout thru today

1 1 1 1 1

towards tried tries truly try

1 1 1 1 1

trying turn turned turning turns

1 1 1 1 1

twice u un unfortunately unless

1 1 1 1 1

unlikely unto useful using usually

1 1 1 1 1

uucp v value various via

1 1 1 1 1

viz vs w wanted wanting

1 1 1 1 1

ways welcome wells whatever when's

1 1 1 1 1

whence whenever whereafter whereas whereby

1 1 1 1 1

wherein whereupon wherever whither whoever

1 1 1 1 1

why's willing wish wonder work

1 1 1 1 1

worked working works x y

1 1 1 1 1

year years yes young younger

1 1 1 1 1

youngest z zero

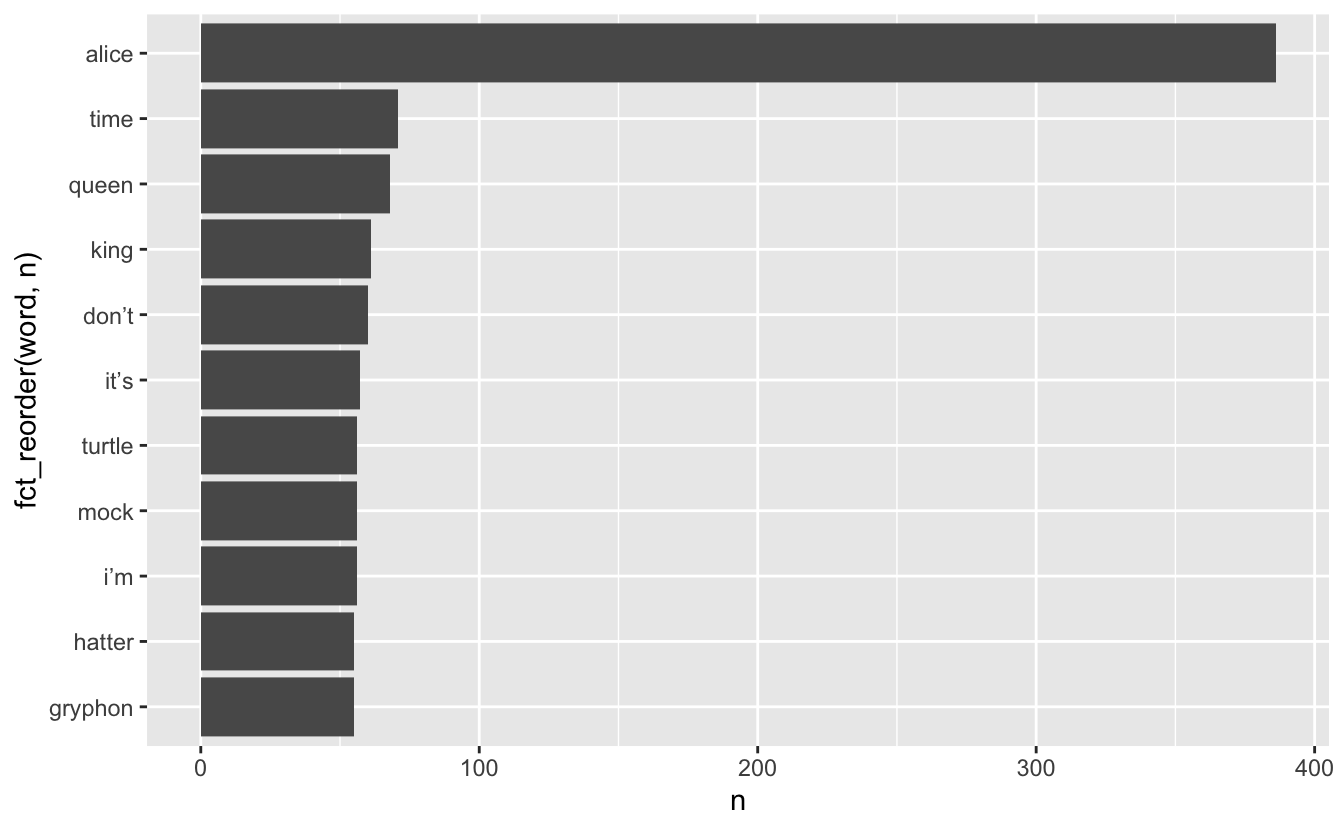

1 1 1 Removing stopwords

We can use an anti_join() to remove the tokens that also appear in the stop_words data.frame

Anti-join with same variable name

Stop words removed

Which words appear together?

ngrams are sets of n consecutive words and we can count these to see which words appear together most frequently.

- ngrams with n = 1 are called “unigrams”: “which”, “words”, “appear”, “together”

- ngrams with n = 2 are called “bigrams”: “which words”, “words appear”, “appear together”

- ngrams with n = 3 are called “trigrams”: “which words appear”, “words appear together”

Which words appear together?

We can extract bigrams using unnest_ngrams() with n = 2

# A tibble: 25,170 × 3

chapter chapter_name ngram

<int> <chr> <chr>

1 1 CHAPTER I. chapter i

2 1 CHAPTER I. down the

3 1 CHAPTER I. the rabbit

4 1 CHAPTER I. rabbit hole

5 1 CHAPTER I. <NA>

6 1 CHAPTER I. <NA>

7 1 CHAPTER I. alice was

8 1 CHAPTER I. was beginning

9 1 CHAPTER I. beginning to

10 1 CHAPTER I. to get

# ℹ 25,160 more rowsWhich words appear together?

Tallying up the bigrams still shows a lot of stop words, but it is able to pick up some common phrases:

Which words appear together?

alice |>

unnest_ngrams(ngram, text, n = 2) |>

separate(ngram, into = c("word1", "word2"), sep = " ") |>

select(word1, word2)# A tibble: 25,170 × 2

word1 word2

<chr> <chr>

1 chapter i

2 down the

3 the rabbit

4 rabbit hole

5 <NA> <NA>

6 <NA> <NA>

7 alice was

8 was beginning

9 beginning to

10 to get

# ℹ 25,160 more rowsalice |>

unnest_ngrams(ngram, text, n = 2) |>

separate(ngram, into = c("word1", "word2"), sep = " ") |>

select(word1, word2) |>

filter(word1 == "alice")# A tibble: 336 × 2

word1 word2

<chr> <chr>

1 alice was

2 alice think

3 alice started

4 alice after

5 alice had

6 alice to

7 alice had

8 alice had

9 alice soon

10 alice began

# ℹ 326 more rowsWhat about when the first word is “alice”?

alice |>

unnest_ngrams(ngram, text, n = 2) |>

separate(ngram, into = c("word1", "word2"), sep = " ") |>

select(word1, word2) |>

filter(word1 == "alice") |>

count(word2, sort = TRUE)# A tibble: 133 × 2

word2 n

<chr> <int>

1 and 18

2 was 17

3 thought 12

4 as 11

5 said 11

6 could 10

7 had 10

8 did 9

9 in 9

10 to 9

# ℹ 123 more rowsWhat about when the second word is “alice”?

alice |>

unnest_ngrams(ngram, text, n = 2) |>

separate(ngram, into = c("word1", "word2"), sep = " ") |>

select(word1, word2) |>

filter(word2 == "alice") |>

count(word1, sort = TRUE)# A tibble: 106 × 2

word1 n

<chr> <int>

1 said 112

2 thought 25

3 to 22

4 and 15

5 poor 11

6 cried 7

7 at 6

8 so 6

9 that 5

10 exclaimed 3

# ℹ 96 more rowsTF-IDF

TF: Term frequency

IDF: Inverse document frequency

TF-IDF: product of TF and IDF

TF gives weight to terms that appear a lot, IDF gives weight to terms that appears in a few documents

TF-IDF with tidytext

# A tibble: 26,687 × 3

text chapter chapter_name

<chr> <int> <chr>

1 chapter 1 CHAPTER I.

2 i 1 CHAPTER I.

3 down 1 CHAPTER I.

4 the 1 CHAPTER I.

5 rabbit 1 CHAPTER I.

6 hole 1 CHAPTER I.

7 alice 1 CHAPTER I.

8 was 1 CHAPTER I.

9 beginning 1 CHAPTER I.

10 to 1 CHAPTER I.

# ℹ 26,677 more rowsTF-IDF with tidytext

TF-IDF with tidytext

# A tibble: 7,549 × 6

text chapter n tf idf tf_idf

<chr> <int> <int> <dbl> <dbl> <dbl>

1 _alice’s 2 1 0.000471 2.48 0.00117

2 _all 12 1 0.000468 2.48 0.00116

3 _all_ 12 1 0.000468 2.48 0.00116

4 _and 9 1 0.000435 2.48 0.00108

5 _are_ 4 1 0.000375 1.10 0.000411

6 _are_ 6 1 0.000382 1.10 0.000420

7 _are_ 8 1 0.000400 1.10 0.000439

8 _are_ 9 1 0.000435 1.10 0.000478

9 _at 9 1 0.000435 2.48 0.00108

10 _before 12 1 0.000468 2.48 0.00116

# ℹ 7,539 more rowsTF-IDF with tidytext

alice |>

unnest_tokens(text, text) |>

count(text, chapter) |>

bind_tf_idf(text, chapter, n) |>

arrange(desc(tf_idf))# A tibble: 7,549 × 6

text chapter n tf idf tf_idf

<chr> <int> <int> <dbl> <dbl> <dbl>

1 dormouse 7 26 0.0112 1.79 0.0201

2 hatter 7 32 0.0138 1.39 0.0191

3 mock 10 28 0.0136 1.39 0.0189

4 turtle 10 28 0.0136 1.39 0.0189

5 gryphon 10 31 0.0151 1.10 0.0166

6 turtle 9 27 0.0117 1.39 0.0163

7 caterpillar 5 25 0.0115 1.39 0.0159

8 dance 10 13 0.00632 2.48 0.0157

9 mock 9 26 0.0113 1.39 0.0157

10 hatter 11 21 0.0110 1.39 0.0153

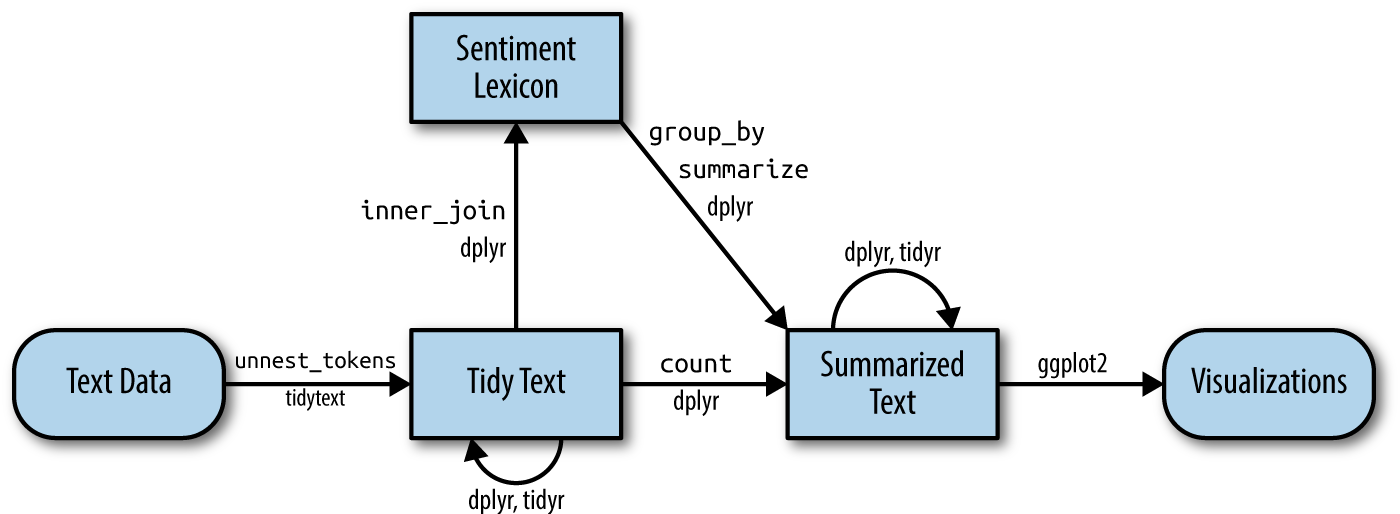

# ℹ 7,539 more rowsSentiment Analysis

Also known as “opinion mining,” sentiment analysis is a way in which we can use computers to attempt to understand the feelings conveyed by a piece of text. This generally relies on a large, human-compiled database of words with known associations such as “positive” and “negative” or specific feelings like “joy”, “surprise”, “disgust”, etc.

Sentiment Analysis

Sentiment Lexicons

The tidytext and textdata packages provide access to three different databases of words and their associated sentiments (known as “sentiment lexicons”). Obviously, none of these can be perfect, as there is no “correct” way to quantify feelings, but they all attempt to capture different elements of how a text makes you feel.

The readily available lexicons are:

afinnfrom Finn Årup Nielsenbingfrom Bing Liu and collaboratorsnrcfrom Saif Mohammad and Peter Turney

Sentiment Lexicons - bing

The bing lexicon contains a large list of words and a binary association, either “positive” or “negative”:

# A tibble: 6,786 × 2

word sentiment

<chr> <chr>

1 2-faces negative

2 abnormal negative

3 abolish negative

4 abominable negative

5 abominably negative

6 abominate negative

7 abomination negative

8 abort negative

9 aborted negative

10 aborts negative

# ℹ 6,776 more rowsSentiment Lexicons - afinn

The afinn lexicon goes slightly further, assigning words a value between -5 and 5 that represents their positivity or negativity.

Sentiment Lexicons - nrc

The nrc lexicon takes a different approach and assigns each word an associated sentiment. Some words appear more than once because they have multiple associations:

Sentiment Analysis

We can use one of these databases to analyze Alice’s Adventures in Wonderland by breaking the text down into words and combining the result with a lexicon. Let’s use bing to assign “positive” and “negative” labels to as many words as possible in the book. (Note that this time the variable created by unnest_tokens is called word, to match the column name in bing).

# A tibble: 1,409 × 4

chapter chapter_name word sentiment

<int> <chr> <chr> <chr>

1 1 CHAPTER I. tired negative

2 1 CHAPTER I. well positive

3 1 CHAPTER I. hot positive

4 1 CHAPTER I. stupid negative

5 1 CHAPTER I. pleasure positive

6 1 CHAPTER I. worth positive

7 1 CHAPTER I. trouble negative

8 1 CHAPTER I. remarkable positive

9 1 CHAPTER I. burning negative

10 1 CHAPTER I. fortunately positive

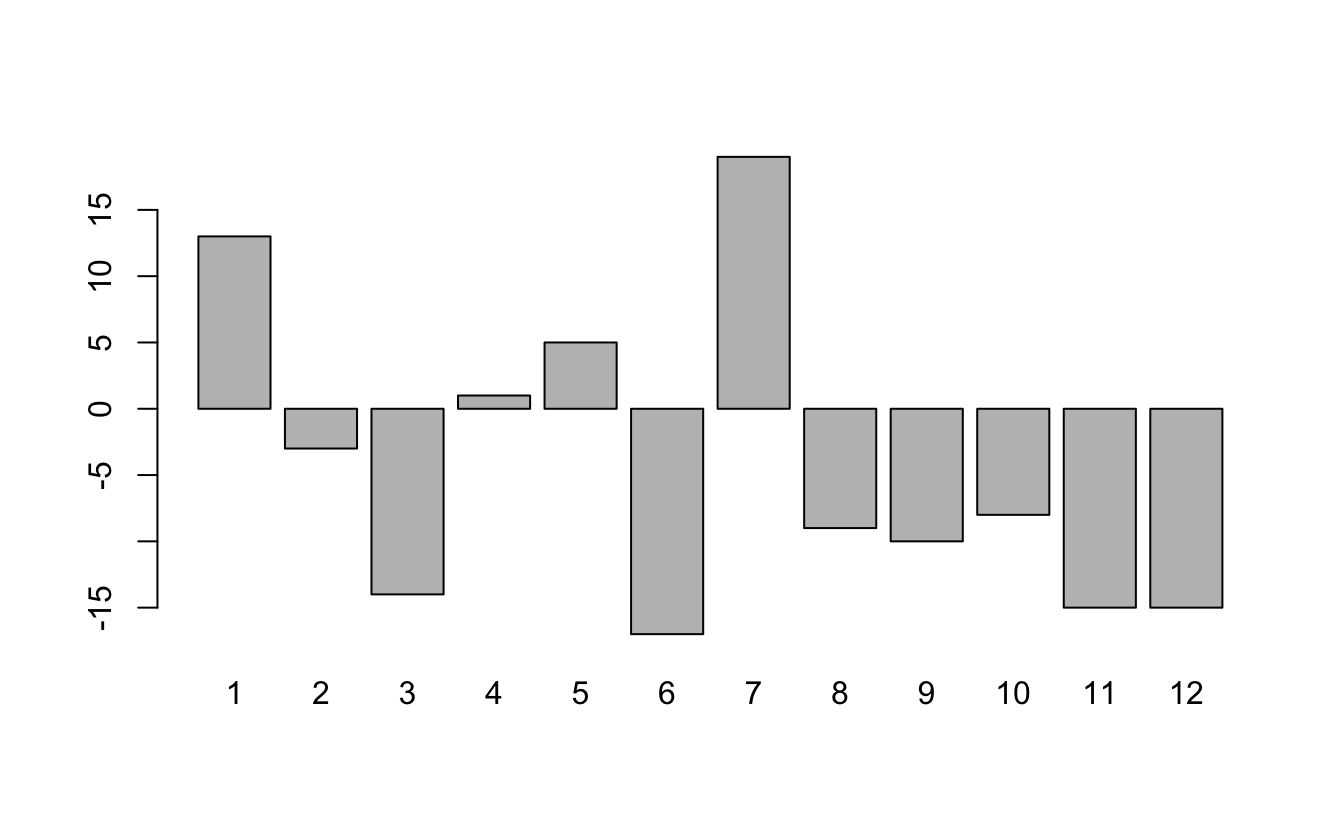

# ℹ 1,399 more rowsSentiment Analysis

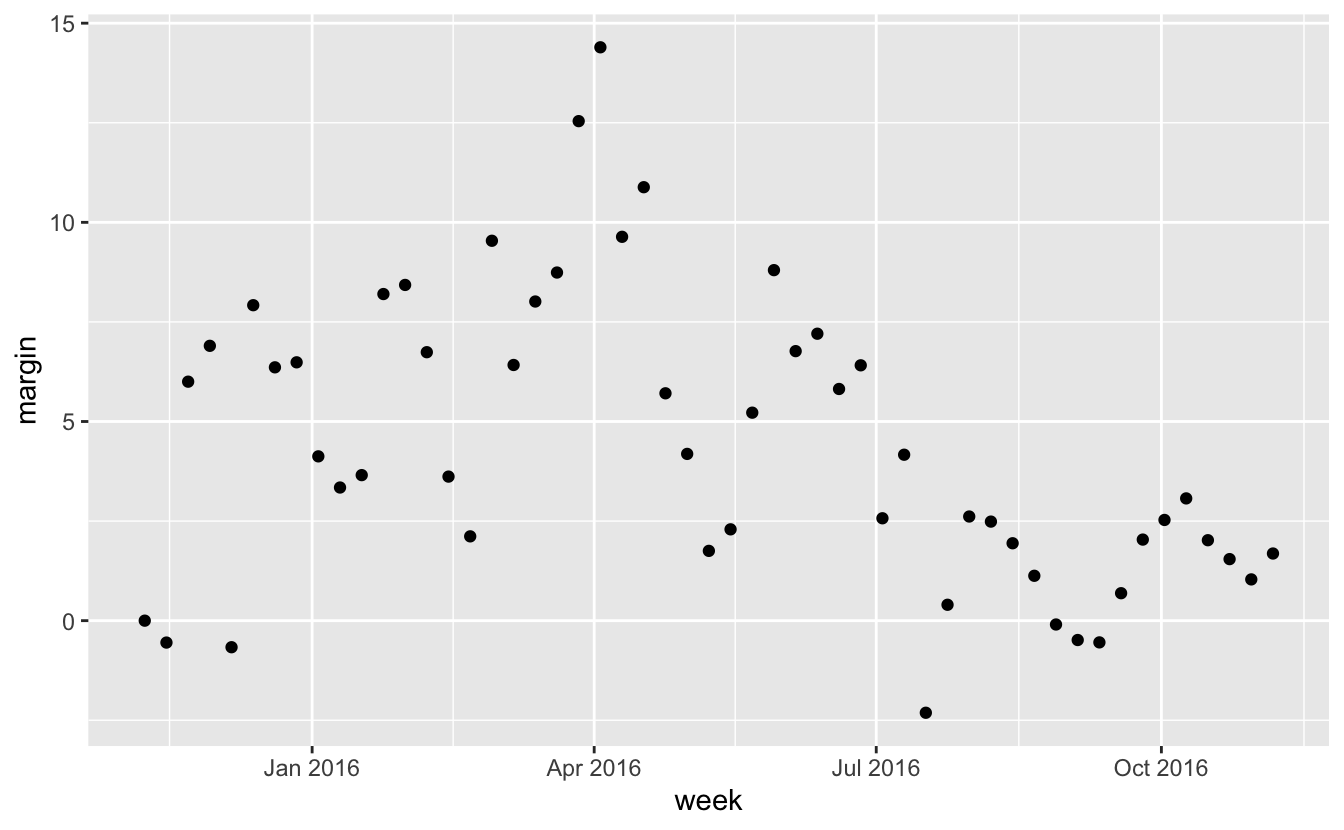

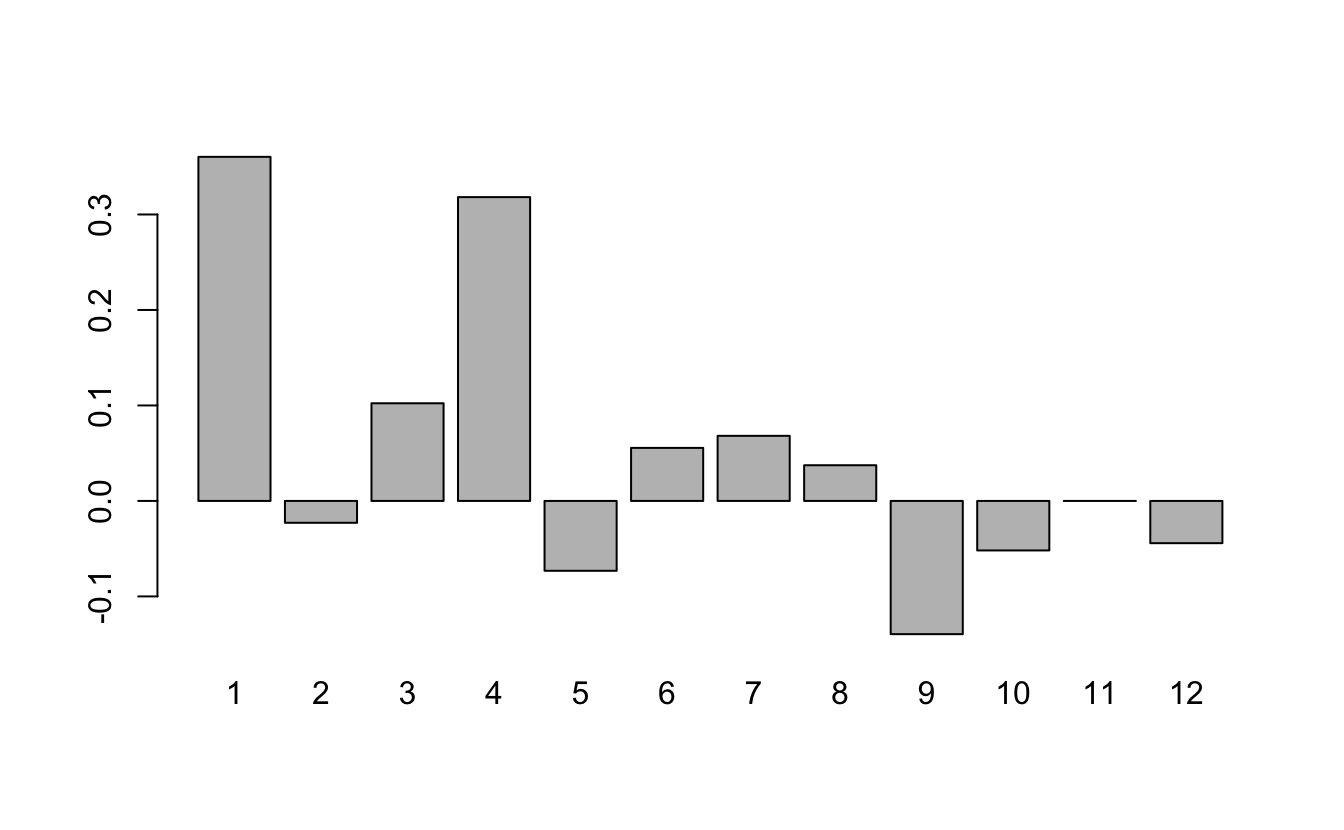

We can now group and summarize this new dataset the same as any other. For example, let’s look at the sentiment by chapter. We’ll do this by counting the number of “positive” words and subtracting the number of “negative” words:

Sentiment Analysis

Sentiment Analysis

Sentiment Analysis

Alternatively, we could use the afinn lexicon and quantify the “sentiment” of each chapter by the average of all words with numeric associations:

Sentiment Analysis

Sentiment Analysis

Similarly, we can find the most frequent sentiment association in the nrc lexicon for each chapter. Unfortunately, for all chapters, the most frequent sentiment association ends up being the rather bland “positive” or “negative”:

alice |>

unnest_tokens(word, text) |>

inner_join(get_sentiments("nrc")) |>

group_by(chapter) |>

summarise(sentiment = names(which.max(table(sentiment))))# A tibble: 12 × 2

chapter sentiment

<int> <chr>

1 1 positive

2 2 positive

3 3 positive

4 4 positive

5 5 positive

6 6 negative

7 7 positive

8 8 positive

9 9 positive

10 10 positive

11 11 positive

12 12 positive Sentiment Analysis

We’ll try to spice things up by removing “positive” and “negative” from the nrc lexicon:

Sentiment Analysis

Now we see a lot of “anticipation”:

alice |>

unnest_tokens(word, text) |>

inner_join(nrc_fun) |>

group_by(chapter) |>

summarise(sentiment = names(which.max(table(sentiment))))# A tibble: 12 × 2

chapter sentiment

<int> <chr>

1 1 anticipation

2 2 anticipation

3 3 sadness

4 4 anticipation

5 5 trust

6 6 anticipation

7 7 anticipation

8 8 anticipation

9 9 trust

10 10 joy

11 11 anticipation

12 12 trust Importing data

One of the most common ways of storing and sharing data is through electronic spreadsheets.

A spreadsheet is a file version of a data frame.

But there are many ways to store spreadsheets in files.

Importing data

To import data we need to:

Identify the file’s location.

Know what function or parsers to use.

For the second step it helps to know the file type and encoding.

File types

Files can generally be classified into two categories: text and binary.

We describe the most widely used format for storing data for both these types and learn how to identify them.

Text files

You have already worked with text files: R scripts and Quarto files, for example.

dslabs offers examples:

Text files

An advantage of text files is that we can easily “look” at them without having to purchase any kind of special software or follow complicated instructions.

Exercise:

- copy

murders.csvinto your working directory and examine it withless. - Then try the Open file RStudio tool.

- copy

Text files

Line breaks are used to separate rows and a delimiter to separate columns within a row.

The most common delimiters are comma (

,), semicolon (;), space (), and tab (\t).Different parsers are used to read these files, so we need to know what delimiter was used.

In some cases, the delimiter can be inferred from file suffix:

csv,tsv, for example.But we recommend looking at the file rather than inferring from the suffix.

Text files

- In R, you can look at any number of lines from within R using the

readLinesfunction:

Binary files

Opening image files such as jpg or png in a text editor or using

readLinesin R will not show comprehensible content: these are binary files.Unlike text files, which are designed for human readability and have standardized conventions, binary files have many formats specific to their data type.

While R’s

readBinfunction can process any binary file, interpreting the output necessitates a thorough understanding of the file’s structure.We focus on the the most prevalent binary formats for spreadsheets: Microsoft Excel xls and xlsx.

R Base Parsers

Here example of useful R base parsers:

readr Parsers

readr provides alternatives that produce tibbles:

Notice the messages produced.

readr Parsers

| Function | Format | Suffix |

|---|---|---|

| read_table | white space separated values | txt |

| read_csv | comma separated values | csv |

| read_csv2 | semicolon separated values | csv |

| read_tsv | tab separated values | tsv |

| read_delim | must define delimiter | txt |

| read_lines | similar to readLines |

any file |

readxl Parsers

For Excel files you can use the readxl package.

readxl Parsers

You can read specific sheets and see them using

Note that read_xls has a sheet argument.

readxl Parsers

| Function | Format | Suffix |

|---|---|---|

| read_excel | auto detect the format | xls, xlsx |

| read_xls | original format | xls |

| read_xlsx | new format | xlsx |

| excel_sheets | detects sheets | xls, xlsx |

data.table Parsers

The data.table package provide a very fast parser:

Note: It returns a file in data.table format which we have mentioned but not explained.

scan

The

scanfunction is the most general parser.It will read in any text file and return a vector so you are on your own coverting it to a data frame.

Because it returns a vector, you need to tell it in advance what data type to expect:

[1] "state" "abb" "region" "population" "total"

[6] "Alabama" "AL" "South" "4779736" "135" - It can also be used to read from the console. Try typing

scan(). Hit return to stop.

Encoding

Computer translates everything into 0s and 1s.

ASCII is an encoding system that assigns specific numbers to characters.

Using 7 bits, ASCII can represent \(2^7 = 128\) unique symbols, sufficient for all English keyboard characters.

However, many global languages contain characters outside ASCII’s range.

Encoding

For instance, the é in “México” isn’t in ASCII’s catalog.

To address this, broader encodings emerged.

Unicode offers variations using 8, 16, or 32 bits, known as UTF-8, UTF-16, and UTF-32.

RStudio typically uses UTF-8 as its default.

Notably, ASCII is a subset of UTF-8, meaning that if a file is ASCII-encoded, presuming it’s UTF-8 encoded won’t cause issues.

Encoding

However, there other encodings, such as ISO-8859-1 (also known as Latin-1) developed for the western European languages, Big5 for Traditional Chinese, and ISO-8859-6 for Arabic.

Take a look at this file:

Encoding

The readr parsers permit us to specify an encoding.

It also includes a function that tries to guess the encoding:

Encoding

- Once we know the encoding we can specify it through the

localeargument:

- We’ll learn more about locales later.

Encoding

- We can now see that the characters in the header were read in correctly:

# A tibble: 7 × 4

nombre f.n. estampa puntuación

<chr> <chr> <dttm> <dbl>

1 Beyoncé 04 de septiembre de 1981 2023-09-22 02:11:02 875

2 Blümchen 20 de abril de 1980 2023-09-22 03:23:05 990

3 João 10 de junio de 1931 2023-09-21 22:43:28 989

4 López 24 de julio de 1969 2023-09-22 01:06:59 887

5 Ñengo 15 de diciembre de 1981 2023-09-21 23:35:37 931

6 Plácido 24 de enero de 1941 2023-09-21 23:17:21 887

7 Thalía 26 de agosto de 1971 2023-09-21 23:08:02 830Downloading files

A common place for data to reside is on the internet.

We can download these files and then import them.

We can also read them directly from the web.

Downloading files

- If you want a local copy, you can use

download.file:

This will download the file and save it on your system with the name

murders.csv.Note You can use any name here, not necessarily

murders.csv.

Downloading files

Warning

The function download.file overwrites existing files without warning.

- Two functions that are sometimes useful when downloading data from the internet are

tempdirandtempfile.

Parsing dates and times

We have described three main types of vectors: numeric, character, and logical.

When analyzing data, we often encounter variables that are dates.

Although we can represent a date with a string, for example

November 2, 2017, once we pick a reference day, referred to as the epoch by computer programmers, they can be converted to numbers by calculating the number of days since the epoch.In R and Unix, the epoch is defined as January 1, 1970.

Parsing dates and times

If I tell you it’s November 2, 2017, you know what this means immediately.

If I tell you it’s day 17,204, you will be quite confused.

Similar problems arise with times and even more complications can appear due to time zones.

For this reason, R defines a data type just for dates and times.

The date data type

- We can see an example of the data type R uses for data here:

[1] "2016-11-03" "2016-11-01" "2016-11-02" "2016-11-04" "2016-11-03"

[6] "2016-11-03"- The dates look like strings, but they are not:

The date data type

- Look at what happens when we convert them to numbers:

- It turns them into days since the epoch.

The date data type

as.Dateconverts characters into dates.So to see that the epoch is day 0 we can type.

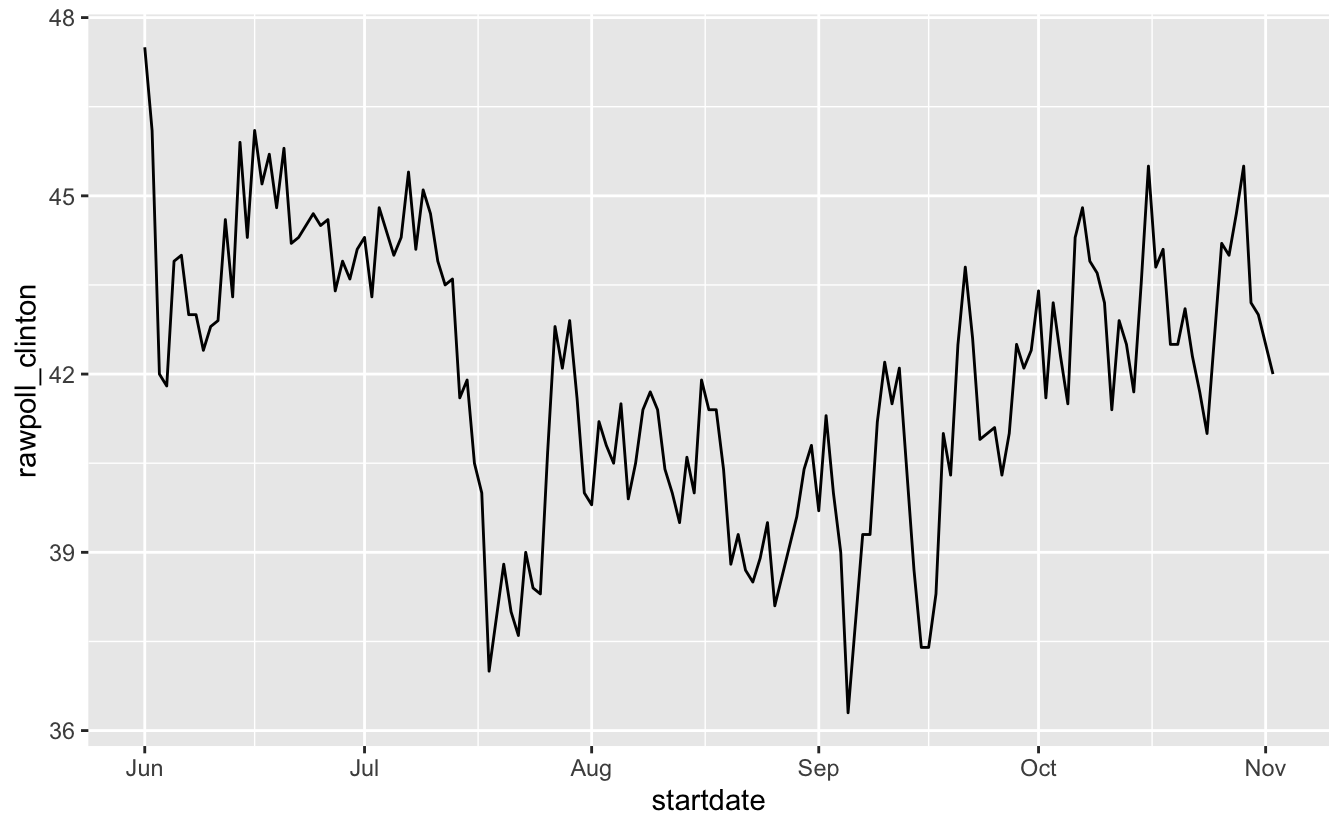

- Plotting functions, such as those in ggplot, are aware of the date format.

The date data type

- Scatterplots use the numeric representation to assign positions, but include the string in the labels:

The date data type

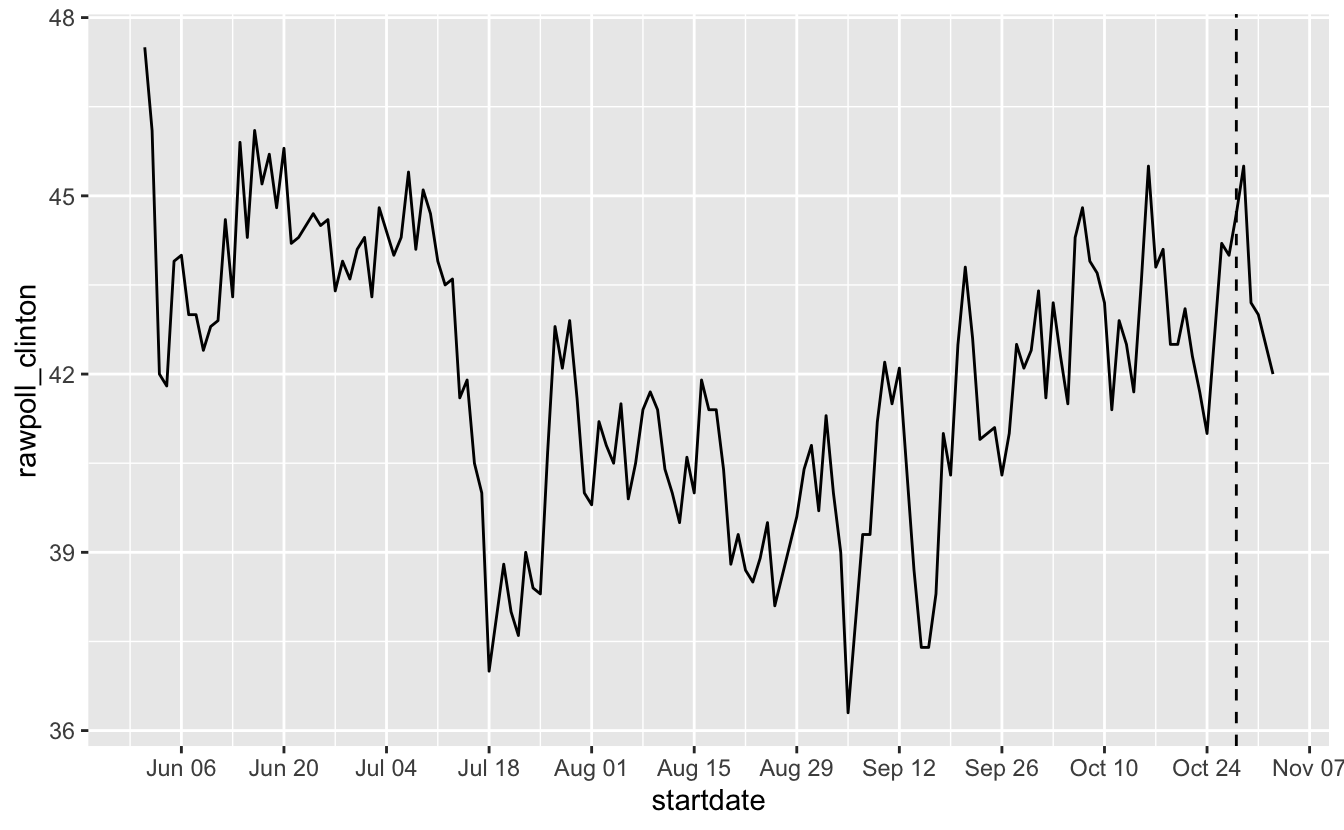

ggplot offers convenient functions to change labels:

polls_us_election_2016 |>

filter(startdate >= make_date(2016, 6, 1)) |>

filter(pollster == "Ipsos" & state == "U.S.") |>

ggplot(aes(startdate, rawpoll_clinton)) +

geom_line() +

scale_x_date(date_breaks = "2 weeks", date_labels = "%b %d") +

geom_vline(xintercept = as.Date("2016-10-28"), linetype = "dashed")

The lubridate package

- The lubridate package provides tools to work with date and times.

- We will take a random sample of dates to show some of the useful things one can do:

The lubridate package

- The functions

year,monthanddayextract those values:

# A tibble: 10 × 4

date month day year

<date> <int> <int> <int>

1 2016-05-31 5 31 2016

2 2016-08-08 8 8 2016

3 2016-08-19 8 19 2016

4 2016-09-22 9 22 2016

5 2016-09-27 9 27 2016

6 2016-10-12 10 12 2016

7 2016-10-24 10 24 2016

8 2016-10-26 10 26 2016

9 2016-10-29 10 29 2016

10 2016-10-30 10 30 2016The lubridate package

- We can also extract the month labels:

The lubridate package

Another useful set of functions are the parsers that convert strings into dates.

The function

ymdassumes the dates are in the format YYYY-MM-DD and tries to parse as well as possible.

The lubridate package

A further complication comes from the fact that dates often come in different formats in which the order of year, month, and day are different.

The preferred format is to show year (with all four digits), month (two digits), and then day, or what is called the ISO 8601.

Specifically we use YYYY-MM-DD so that if we order the string, it will be ordered by date.

You can see the function

ymdreturns them in this format.

The lubridate package

But, what if you encounter dates such as “09/01/02”? This could be September 1, 2002 or January 2, 2009 or January 9, 2002.

In these cases, examining the entire vector of dates will help you determine what format it is by process of elimination.

Once you know, you can use the many parsers provided by lubridate.

For example, if the string is:

The lubridate package

- The

ymdfunction assumes the first entry is the year, the second is the month, and the third is the day, so it converts it to:

The lubridate package

- The

mdyfunction assumes the first entry is the month, then the day, then the year:

The lubridate package

The lubridate package provides a function for every possibility.

Here is the other common one:

The lubridate package

The lubridate package is also useful for dealing with times.

In base R, you can get the current time typing

Sys.time().The lubridate package provides a slightly more advanced function,

now, that permits you to define the time zone:

The lubridate package

- You can see all the available time zones with:

[1] "Africa/Abidjan" "Africa/Accra"

[3] "Africa/Addis_Ababa" "Africa/Algiers"

[5] "Africa/Asmara" "Africa/Asmera"

[7] "Africa/Bamako" "Africa/Bangui"

[9] "Africa/Banjul" "Africa/Bissau"

[11] "Africa/Blantyre" "Africa/Brazzaville"

[13] "Africa/Bujumbura" "Africa/Cairo"

[15] "Africa/Casablanca" "Africa/Ceuta"

[17] "Africa/Conakry" "Africa/Dakar"

[19] "Africa/Dar_es_Salaam" "Africa/Djibouti"

[21] "Africa/Douala" "Africa/El_Aaiun"

[23] "Africa/Freetown" "Africa/Gaborone"

[25] "Africa/Harare" "Africa/Johannesburg"

[27] "Africa/Juba" "Africa/Kampala"

[29] "Africa/Khartoum" "Africa/Kigali"

[31] "Africa/Kinshasa" "Africa/Lagos"

[33] "Africa/Libreville" "Africa/Lome"

[35] "Africa/Luanda" "Africa/Lubumbashi"

[37] "Africa/Lusaka" "Africa/Malabo"

[39] "Africa/Maputo" "Africa/Maseru"

[41] "Africa/Mbabane" "Africa/Mogadishu"

[43] "Africa/Monrovia" "Africa/Nairobi"

[45] "Africa/Ndjamena" "Africa/Niamey"

[47] "Africa/Nouakchott" "Africa/Ouagadougou"

[49] "Africa/Porto-Novo" "Africa/Sao_Tome"

[51] "Africa/Timbuktu" "Africa/Tripoli"

[53] "Africa/Tunis" "Africa/Windhoek"

[55] "America/Adak" "America/Anchorage"

[57] "America/Anguilla" "America/Antigua"

[59] "America/Araguaina" "America/Argentina/Buenos_Aires"

[61] "America/Argentina/Catamarca" "America/Argentina/ComodRivadavia"

[63] "America/Argentina/Cordoba" "America/Argentina/Jujuy"

[65] "America/Argentina/La_Rioja" "America/Argentina/Mendoza"

[67] "America/Argentina/Rio_Gallegos" "America/Argentina/Salta"

[69] "America/Argentina/San_Juan" "America/Argentina/San_Luis"

[71] "America/Argentina/Tucuman" "America/Argentina/Ushuaia"

[73] "America/Aruba" "America/Asuncion"

[75] "America/Atikokan" "America/Atka"

[77] "America/Bahia" "America/Bahia_Banderas"

[79] "America/Barbados" "America/Belem"

[81] "America/Belize" "America/Blanc-Sablon"

[83] "America/Boa_Vista" "America/Bogota"

[85] "America/Boise" "America/Buenos_Aires"

[87] "America/Cambridge_Bay" "America/Campo_Grande"

[89] "America/Cancun" "America/Caracas"

[91] "America/Catamarca" "America/Cayenne"

[93] "America/Cayman" "America/Chicago"

[95] "America/Chihuahua" "America/Ciudad_Juarez"

[97] "America/Coral_Harbour" "America/Cordoba"

[99] "America/Costa_Rica" "America/Coyhaique"

[101] "America/Creston" "America/Cuiaba"

[103] "America/Curacao" "America/Danmarkshavn"

[105] "America/Dawson" "America/Dawson_Creek"

[107] "America/Denver" "America/Detroit"

[109] "America/Dominica" "America/Edmonton"

[111] "America/Eirunepe" "America/El_Salvador"

[113] "America/Ensenada" "America/Fort_Nelson"

[115] "America/Fort_Wayne" "America/Fortaleza"

[117] "America/Glace_Bay" "America/Godthab"

[119] "America/Goose_Bay" "America/Grand_Turk"

[121] "America/Grenada" "America/Guadeloupe"

[123] "America/Guatemala" "America/Guayaquil"

[125] "America/Guyana" "America/Halifax"

[127] "America/Havana" "America/Hermosillo"

[129] "America/Indiana/Indianapolis" "America/Indiana/Knox"

[131] "America/Indiana/Marengo" "America/Indiana/Petersburg"

[133] "America/Indiana/Tell_City" "America/Indiana/Vevay"

[135] "America/Indiana/Vincennes" "America/Indiana/Winamac"

[137] "America/Indianapolis" "America/Inuvik"

[139] "America/Iqaluit" "America/Jamaica"

[141] "America/Jujuy" "America/Juneau"

[143] "America/Kentucky/Louisville" "America/Kentucky/Monticello"

[145] "America/Knox_IN" "America/Kralendijk"

[147] "America/La_Paz" "America/Lima"

[149] "America/Los_Angeles" "America/Louisville"

[151] "America/Lower_Princes" "America/Maceio"

[153] "America/Managua" "America/Manaus"

[155] "America/Marigot" "America/Martinique"

[157] "America/Matamoros" "America/Mazatlan"

[159] "America/Mendoza" "America/Menominee"

[161] "America/Merida" "America/Metlakatla"

[163] "America/Mexico_City" "America/Miquelon"

[165] "America/Moncton" "America/Monterrey"

[167] "America/Montevideo" "America/Montreal"

[169] "America/Montserrat" "America/Nassau"

[171] "America/New_York" "America/Nipigon"

[173] "America/Nome" "America/Noronha"

[175] "America/North_Dakota/Beulah" "America/North_Dakota/Center"

[177] "America/North_Dakota/New_Salem" "America/Nuuk"

[179] "America/Ojinaga" "America/Panama"

[181] "America/Pangnirtung" "America/Paramaribo"

[183] "America/Phoenix" "America/Port_of_Spain"

[185] "America/Port-au-Prince" "America/Porto_Acre"

[187] "America/Porto_Velho" "America/Puerto_Rico"

[189] "America/Punta_Arenas" "America/Rainy_River"

[191] "America/Rankin_Inlet" "America/Recife"

[193] "America/Regina" "America/Resolute"

[195] "America/Rio_Branco" "America/Rosario"

[197] "America/Santa_Isabel" "America/Santarem"

[199] "America/Santiago" "America/Santo_Domingo"

[201] "America/Sao_Paulo" "America/Scoresbysund"

[203] "America/Shiprock" "America/Sitka"

[205] "America/St_Barthelemy" "America/St_Johns"

[207] "America/St_Kitts" "America/St_Lucia"

[209] "America/St_Thomas" "America/St_Vincent"

[211] "America/Swift_Current" "America/Tegucigalpa"

[213] "America/Thule" "America/Thunder_Bay"

[215] "America/Tijuana" "America/Toronto"

[217] "America/Tortola" "America/Vancouver"

[219] "America/Virgin" "America/Whitehorse"

[221] "America/Winnipeg" "America/Yakutat"

[223] "America/Yellowknife" "Antarctica/Casey"

[225] "Antarctica/Davis" "Antarctica/DumontDUrville"

[227] "Antarctica/Macquarie" "Antarctica/Mawson"

[229] "Antarctica/McMurdo" "Antarctica/Palmer"

[231] "Antarctica/Rothera" "Antarctica/South_Pole"

[233] "Antarctica/Syowa" "Antarctica/Troll"

[235] "Antarctica/Vostok" "Arctic/Longyearbyen"

[237] "Asia/Aden" "Asia/Almaty"

[239] "Asia/Amman" "Asia/Anadyr"

[241] "Asia/Aqtau" "Asia/Aqtobe"

[243] "Asia/Ashgabat" "Asia/Ashkhabad"

[245] "Asia/Atyrau" "Asia/Baghdad"

[247] "Asia/Bahrain" "Asia/Baku"

[249] "Asia/Bangkok" "Asia/Barnaul"

[251] "Asia/Beirut" "Asia/Bishkek"

[253] "Asia/Brunei" "Asia/Calcutta"

[255] "Asia/Chita" "Asia/Choibalsan"

[257] "Asia/Chongqing" "Asia/Chungking"

[259] "Asia/Colombo" "Asia/Dacca"

[261] "Asia/Damascus" "Asia/Dhaka"

[263] "Asia/Dili" "Asia/Dubai"

[265] "Asia/Dushanbe" "Asia/Famagusta"

[267] "Asia/Gaza" "Asia/Harbin"

[269] "Asia/Hebron" "Asia/Ho_Chi_Minh"

[271] "Asia/Hong_Kong" "Asia/Hovd"

[273] "Asia/Irkutsk" "Asia/Istanbul"

[275] "Asia/Jakarta" "Asia/Jayapura"

[277] "Asia/Jerusalem" "Asia/Kabul"

[279] "Asia/Kamchatka" "Asia/Karachi"

[281] "Asia/Kashgar" "Asia/Kathmandu"

[283] "Asia/Katmandu" "Asia/Khandyga"

[285] "Asia/Kolkata" "Asia/Krasnoyarsk"

[287] "Asia/Kuala_Lumpur" "Asia/Kuching"

[289] "Asia/Kuwait" "Asia/Macao"

[291] "Asia/Macau" "Asia/Magadan"

[293] "Asia/Makassar" "Asia/Manila"

[295] "Asia/Muscat" "Asia/Nicosia"

[297] "Asia/Novokuznetsk" "Asia/Novosibirsk"

[299] "Asia/Omsk" "Asia/Oral"

[301] "Asia/Phnom_Penh" "Asia/Pontianak"

[303] "Asia/Pyongyang" "Asia/Qatar"

[305] "Asia/Qostanay" "Asia/Qyzylorda"

[307] "Asia/Rangoon" "Asia/Riyadh"

[309] "Asia/Saigon" "Asia/Sakhalin"

[311] "Asia/Samarkand" "Asia/Seoul"

[313] "Asia/Shanghai" "Asia/Singapore"

[315] "Asia/Srednekolymsk" "Asia/Taipei"

[317] "Asia/Tashkent" "Asia/Tbilisi"

[319] "Asia/Tehran" "Asia/Tel_Aviv"

[321] "Asia/Thimbu" "Asia/Thimphu"

[323] "Asia/Tokyo" "Asia/Tomsk"

[325] "Asia/Ujung_Pandang" "Asia/Ulaanbaatar"

[327] "Asia/Ulan_Bator" "Asia/Urumqi"

[329] "Asia/Ust-Nera" "Asia/Vientiane"

[331] "Asia/Vladivostok" "Asia/Yakutsk"

[333] "Asia/Yangon" "Asia/Yekaterinburg"

[335] "Asia/Yerevan" "Atlantic/Azores"

[337] "Atlantic/Bermuda" "Atlantic/Canary"

[339] "Atlantic/Cape_Verde" "Atlantic/Faeroe"

[341] "Atlantic/Faroe" "Atlantic/Jan_Mayen"

[343] "Atlantic/Madeira" "Atlantic/Reykjavik"

[345] "Atlantic/South_Georgia" "Atlantic/St_Helena"

[347] "Atlantic/Stanley" "Australia/ACT"

[349] "Australia/Adelaide" "Australia/Brisbane"

[351] "Australia/Broken_Hill" "Australia/Canberra"

[353] "Australia/Currie" "Australia/Darwin"

[355] "Australia/Eucla" "Australia/Hobart"

[357] "Australia/LHI" "Australia/Lindeman"

[359] "Australia/Lord_Howe" "Australia/Melbourne"

[361] "Australia/North" "Australia/NSW"

[363] "Australia/Perth" "Australia/Queensland"

[365] "Australia/South" "Australia/Sydney"

[367] "Australia/Tasmania" "Australia/Victoria"

[369] "Australia/West" "Australia/Yancowinna"

[371] "Brazil/Acre" "Brazil/DeNoronha"

[373] "Brazil/East" "Brazil/West"

[375] "Canada/Atlantic" "Canada/Central"

[377] "Canada/Eastern" "Canada/Mountain"

[379] "Canada/Newfoundland" "Canada/Pacific"

[381] "Canada/Saskatchewan" "Canada/Yukon"

[383] "CET" "Chile/Continental"

[385] "Chile/EasterIsland" "CST6CDT"

[387] "Cuba" "EET"

[389] "Egypt" "Eire"

[391] "EST" "EST5EDT"

[393] "Etc/GMT" "Etc/GMT-0"

[395] "Etc/GMT-1" "Etc/GMT-10"

[397] "Etc/GMT-11" "Etc/GMT-12"

[399] "Etc/GMT-13" "Etc/GMT-14"

[401] "Etc/GMT-2" "Etc/GMT-3"

[403] "Etc/GMT-4" "Etc/GMT-5"

[405] "Etc/GMT-6" "Etc/GMT-7"

[407] "Etc/GMT-8" "Etc/GMT-9"

[409] "Etc/GMT+0" "Etc/GMT+1"

[411] "Etc/GMT+10" "Etc/GMT+11"

[413] "Etc/GMT+12" "Etc/GMT+2"

[415] "Etc/GMT+3" "Etc/GMT+4"

[417] "Etc/GMT+5" "Etc/GMT+6"

[419] "Etc/GMT+7" "Etc/GMT+8"

[421] "Etc/GMT+9" "Etc/GMT0"

[423] "Etc/Greenwich" "Etc/UCT"

[425] "Etc/Universal" "Etc/UTC"

[427] "Etc/Zulu" "Europe/Amsterdam"

[429] "Europe/Andorra" "Europe/Astrakhan"

[431] "Europe/Athens" "Europe/Belfast"

[433] "Europe/Belgrade" "Europe/Berlin"

[435] "Europe/Bratislava" "Europe/Brussels"

[437] "Europe/Bucharest" "Europe/Budapest"

[439] "Europe/Busingen" "Europe/Chisinau"

[441] "Europe/Copenhagen" "Europe/Dublin"

[443] "Europe/Gibraltar" "Europe/Guernsey"

[445] "Europe/Helsinki" "Europe/Isle_of_Man"

[447] "Europe/Istanbul" "Europe/Jersey"

[449] "Europe/Kaliningrad" "Europe/Kiev"

[451] "Europe/Kirov" "Europe/Kyiv"

[453] "Europe/Lisbon" "Europe/Ljubljana"

[455] "Europe/London" "Europe/Luxembourg"

[457] "Europe/Madrid" "Europe/Malta"

[459] "Europe/Mariehamn" "Europe/Minsk"

[461] "Europe/Monaco" "Europe/Moscow"

[463] "Europe/Nicosia" "Europe/Oslo"

[465] "Europe/Paris" "Europe/Podgorica"

[467] "Europe/Prague" "Europe/Riga"

[469] "Europe/Rome" "Europe/Samara"

[471] "Europe/San_Marino" "Europe/Sarajevo"

[473] "Europe/Saratov" "Europe/Simferopol"

[475] "Europe/Skopje" "Europe/Sofia"

[477] "Europe/Stockholm" "Europe/Tallinn"

[479] "Europe/Tirane" "Europe/Tiraspol"

[481] "Europe/Ulyanovsk" "Europe/Uzhgorod"

[483] "Europe/Vaduz" "Europe/Vatican"

[485] "Europe/Vienna" "Europe/Vilnius"

[487] "Europe/Volgograd" "Europe/Warsaw"

[489] "Europe/Zagreb" "Europe/Zaporozhye"

[491] "Europe/Zurich" "Factory"

[493] "GB" "GB-Eire"

[495] "GMT" "GMT-0"

[497] "GMT+0" "GMT0"

[499] "Greenwich" "Hongkong"

[501] "HST" "Iceland"

[503] "Indian/Antananarivo" "Indian/Chagos"

[505] "Indian/Christmas" "Indian/Cocos"

[507] "Indian/Comoro" "Indian/Kerguelen"

[509] "Indian/Mahe" "Indian/Maldives"

[511] "Indian/Mauritius" "Indian/Mayotte"

[513] "Indian/Reunion" "Iran"

[515] "Israel" "Jamaica"

[517] "Japan" "Kwajalein"

[519] "Libya" "MET"

[521] "Mexico/BajaNorte" "Mexico/BajaSur"

[523] "Mexico/General" "MST"

[525] "MST7MDT" "Navajo"

[527] "NZ" "NZ-CHAT"

[529] "Pacific/Apia" "Pacific/Auckland"

[531] "Pacific/Bougainville" "Pacific/Chatham"

[533] "Pacific/Chuuk" "Pacific/Easter"

[535] "Pacific/Efate" "Pacific/Enderbury"

[537] "Pacific/Fakaofo" "Pacific/Fiji"

[539] "Pacific/Funafuti" "Pacific/Galapagos"

[541] "Pacific/Gambier" "Pacific/Guadalcanal"

[543] "Pacific/Guam" "Pacific/Honolulu"

[545] "Pacific/Johnston" "Pacific/Kanton"

[547] "Pacific/Kiritimati" "Pacific/Kosrae"

[549] "Pacific/Kwajalein" "Pacific/Majuro"

[551] "Pacific/Marquesas" "Pacific/Midway"

[553] "Pacific/Nauru" "Pacific/Niue"

[555] "Pacific/Norfolk" "Pacific/Noumea"

[557] "Pacific/Pago_Pago" "Pacific/Palau"

[559] "Pacific/Pitcairn" "Pacific/Pohnpei"

[561] "Pacific/Ponape" "Pacific/Port_Moresby"

[563] "Pacific/Rarotonga" "Pacific/Saipan"

[565] "Pacific/Samoa" "Pacific/Tahiti"

[567] "Pacific/Tarawa" "Pacific/Tongatapu"

[569] "Pacific/Truk" "Pacific/Wake"

[571] "Pacific/Wallis" "Pacific/Yap"

[573] "Poland" "Portugal"

[575] "PRC" "PST8PDT"

[577] "ROC" "ROK"

[579] "Singapore" "Turkey"

[581] "UCT" "Universal"

[583] "US/Alaska" "US/Aleutian"

[585] "US/Arizona" "US/Central"

[587] "US/East-Indiana" "US/Eastern"

[589] "US/Hawaii" "US/Indiana-Starke"

[591] "US/Michigan" "US/Mountain"

[593] "US/Pacific" "US/Samoa"

[595] "UTC" "W-SU"

[597] "WET" "Zulu"

attr(,"Version")

[1] "2025c"The lubridate package

- We can also extract hours, minutes, and seconds:

The lubridate package

- The package also includes a function to parse strings into times as well as parsers for time objects that include dates:

The lubridate package

The

make_datefunction can be used to quickly create a date object.For example, to create an date object representing, for example, July 6, 2019 we write:

The lubridate package

We can use it to make vectors of dates.

To make a vector of January 1 for the 80s we write:

The lubridate package

Another very useful function is

round_date.It can be used to round dates to nearest year, quarter, month, week, day, hour, minutes, or seconds.

The lubridate package

Final pointers

You should be aware the there are useful functions for computing operations on time such a

difftime,time_length, andinterval.We don’t cover it here but be aware that the data.table package includes some of the same functionality as lubridate.